How To Make A Web Crawler In Python in June, 2026

Want to create a web crawler in Python? These tutorials show how to build Python crawlers and include in-depth example code.

Whether you are looking to obtain data from a website, track changes on the internet, or use a website API, website crawlers are a great way to get the data you need. While they have many components, crawlers fundamentally use a simple process: download the raw data, process and extract it, and, if desired, store the data in a file or database. There are many ways to do this, and many languages you can build your spider or crawler in.
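The three steps above (download, extract, store) can be sketched in a few lines. This is a minimal illustration, not taken from any of the tutorials below: it uses only the Python standard library, the download step is simulated with a hard-coded page so the example runs offline, and the names `LinkExtractor`, `extract_links`, `SAMPLE_URL`, and `SAMPLE_HTML` are hypothetical.

```python
import json
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collect the href of every <a> tag, resolved against a base URL."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:  # skip anchors that have no href attribute
                self.links.append(urljoin(self.base_url, href))


def extract_links(html, base_url):
    """Return the absolute URLs of all links found in the HTML text."""
    parser = LinkExtractor(base_url)
    parser.feed(html)
    return parser.links


# Step 1 (download) is simulated here. A real crawler would fetch the page,
# for example with urllib.request.urlopen or the requests library.
SAMPLE_URL = "https://example.com/books/"
SAMPLE_HTML = """
<html><body>
  <a href="/books/1">Book one</a>
  <a href="page2.html">Next page</a>
  <a>No href here</a>
</body></html>
"""

# Step 2: process the raw HTML and extract the data of interest.
links = extract_links(SAMPLE_HTML, SAMPLE_URL)

# Step 3: store the result, here serialized as JSON text.
record = json.dumps({"url": SAMPLE_URL, "links": links}, indent=2)
print(record)
```

Relative links are resolved with `urljoin` so that the stored URLs can be fetched later without knowing which page they came from.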

Python is an easy-to-use scripting language, with many libraries and add-ons for making programs, including website crawlers. These tutorials use Python as the primary language for development, and many use libraries that can be integrated with Python to more easily build the final product.

Web Scraping with Selenium and Python in 2023

This tutorial goes over how to perform headless browser crawling using Python to control Selenium and Chrome through WebDriver. It also covers some more advanced topics, such as waiting for JavaScript-rendered elements to appear and extracting data from the page.

Web Scraping with Scrapy: Python Tutorial

This tutorial examines how to install and use Scrapy to crawl an example bookstore on a sample site. It’s well presented and also covers how to export the data.

Python Web Scraping to CSV

This is a really quick tutorial on how to download and extract data from a page with Python, and then export it to a CSV file.
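The export step can be sketched with the standard library's `csv` module. This is an illustration only, not the tutorial's own code: the rows, the field names, and the helper `rows_to_csv` are made up, and the output goes to an in-memory buffer so the example has no side effects.

```python
import csv
import io

# Hypothetical rows, standing in for data extracted from a page.
rows = [
    {"title": "Example Book", "price": "12.50"},
    {"title": "Another, with a comma", "price": "8.00"},
]


def rows_to_csv(rows, fieldnames):
    """Serialize a list of dicts as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


csv_text = rows_to_csv(rows, ["title", "price"])
print(csv_text)
```

To write a real file instead, pass a handle opened with `open("out.csv", "w", newline="", encoding="utf-8")` to `csv.DictWriter`; the `csv` module quotes fields that contain commas automatically.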

Web crawling with Python

The tutorial covers different web crawling strategies and use cases and explains how to build a simple web crawler from scratch in Python using Requests and Beautiful Soup. It also covers the benefits of using a web crawling framework like Scrapy.

The tutorial then goes on to show how to build an example crawler with Scrapy to collect film metadata from IMDb and how Scrapy can be scaled to websites with several million pages.

The tutorial is well-written, easy to follow, and provides practical examples that you can use to develop your own web crawlers.
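A from-scratch crawler of the kind described above usually comes down to a queue of URLs to visit plus a set of URLs already seen. The sketch below assumes a breadth-first strategy restricted to one host and uses the standard library's `html.parser` in place of Beautiful Soup; the fetch function is injected so the example runs against an in-memory `FAKE_SITE` dictionary rather than the network. All names here are hypothetical.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class _Links(HTMLParser):
    """Collect raw href values from <a> tags."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def crawl(start_url, fetch, max_pages=100):
    """Breadth-first crawl limited to the host of start_url.

    fetch is any callable that takes a URL and returns HTML text,
    or None when the page cannot be retrieved.
    """
    host = urlparse(start_url).netloc
    queue = deque([start_url])
    seen = {start_url}  # every URL ever queued, to avoid revisiting
    visited = []        # URLs successfully fetched, in crawl order
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        visited.append(url)
        parser = _Links()
        parser.feed(html)
        for href in parser.hrefs:
            link = urljoin(url, href).split("#")[0]  # absolute, no fragment
            if urlparse(link).netloc == host and link not in seen:
                seen.add(link)
                queue.append(link)
    return visited


# In-memory stand-in for a website, so the sketch needs no network access.
FAKE_SITE = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="https://other.example/x">X</a>',
    "https://example.com/b": '<a href="/">home</a> <a href="/missing">gone</a>',
}

order = crawl("https://example.com/", FAKE_SITE.get)
print(order)
```

For real use, `fetch` could wrap `requests.get(url).text` with error handling; swapping the `deque` for a stack would turn the traversal into a depth-first crawl.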

Web Crawling in Python

These tutorials by Jason Brownlee give three examples of crawlers in Python: downloading pages using the requests library, extracting data using pandas, and using Selenium to download dynamic, JavaScript-dependent data.

Making Web Crawlers Using Scrapy for Python

This tutorial guides you through the process of building a web scraper for AliExpress.com. It also provides a comparison between Scrapy and BeautifulSoup.

The tutorial includes step-by-step instructions for installing Scrapy, using the Scrapy Shell to test assumptions about website behavior, and using CSS selectors and XPath for data extraction.

The tutorial concludes with a demonstration of how to create a custom spider for a Scrapy project to scrape data from AliExpress.com.

Web Crawler In Python

This Python tutorial uses the requests library to download pages and the Beautiful Soup 4 library to parse the downloaded HTML and extract data from it.

Build a Python Web Crawler From Scratch

Bekhruz Tuychiev takes you through the basics of HTML DOM structure and XPath, showing you how to locate specific elements on a web page and extract data from them.

The tutorial also includes a practical example of scraping an online store’s computer section and storing the extracted data in a custom class.

Additionally, the author demonstrates how to handle pagination and scrape data from multiple pages using the same XPath syntax.
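The idea of locating elements with XPath and storing them in a custom class can be sketched as follows. This is not the author's code: it uses the standard library's `xml.etree.ElementTree`, which supports only a subset of XPath and requires well-formed markup (real-world HTML usually needs a parser such as lxml), and the `Product` class, the `PAGE` markup, and `parse_products` are invented for illustration.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass


@dataclass
class Product:
    """Custom container for one extracted item."""
    name: str
    price: float


# Hypothetical, well-formed product listing page.
PAGE = """
<html><body>
  <div class="product"><h2>Laptop A</h2><span class="price">999.00</span></div>
  <div class="product"><h2>Laptop B</h2><span class="price">1249.50</span></div>
  <div class="banner"><h2>Sale!</h2></div>
</body></html>
"""


def parse_products(markup):
    """Select product blocks by XPath and convert each one to a Product."""
    root = ET.fromstring(markup.strip())
    products = []
    # .//div[@class='product'] matches product divs at any depth.
    for node in root.findall(".//div[@class='product']"):
        name = node.findtext("h2")
        price = node.findtext("span[@class='price']")
        products.append(Product(name=name, price=float(price)))
    return products


products = parse_products(PAGE)
print(products)
```

Because every listing page is assumed to share the same structure, the same `parse_products` call can be applied to each page of a paginated result set.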

Python Web Scraping Tutorial

This tutorial shows how to use the requests and Beautiful Soup libraries to download pages and parse them.

How To Crawl A Web Page with Scrapy and Python 3

This tutorial by Justin Duke, published in the DigitalOcean community section, shows how to extract quotes from a webpage using the Scrapy library.

How to make a Web Crawler in under 50 lines of Python code

This is a tutorial made by Stephen from Net Instructions on how to make a web crawler using Python.

A Basic 12 Line Website Crawler in Python

This is a tutorial made by Mr Falkreath about creating a basic website crawler in Python using 12 lines of Python code. This includes explanations of the logic behind the crawler and how to create the Python code.

Scraping Web Pages with Scrapy – Michael Herman

This is a tutorial posted by Michael Herman about crawling web pages with Python and the Scrapy library. It includes code for the central item class and for the spider that performs the downloading, and explains how to store the data once it is obtained.

Build a Python Web Crawler with Scrapy – DevX

This is a tutorial made by Alessandro Zanni on how to build a Python-based web crawler using the Scrapy library. It describes the tools that are needed, the installation process for Python, the scraper code, and how to test the result.

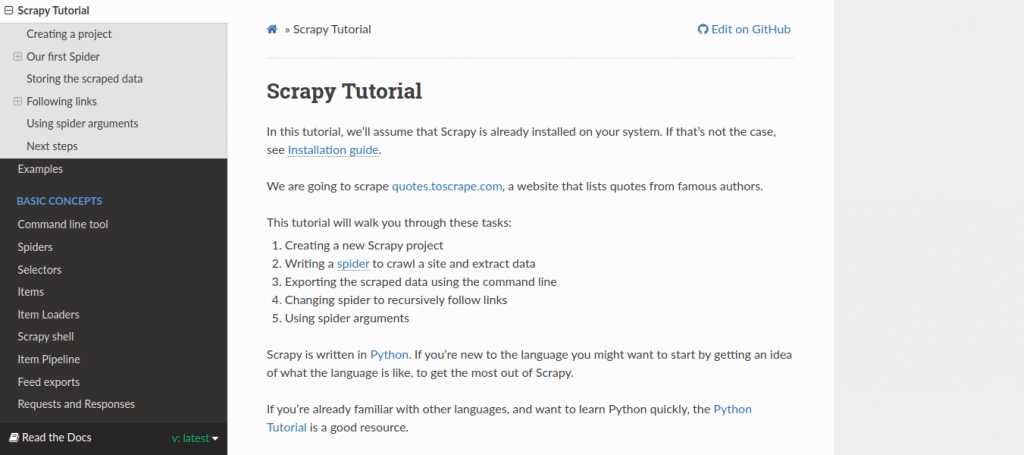

Scrapy Tutorial — Scrapy Documentation

This is the official tutorial for building a web crawler with the Scrapy library, written in Python. It walks through creating a project, defining an item class to hold the scraped data, and writing a spider that downloads pages, extracts information, and stores it.

Web Scraping with Scrapy and MongoDB – Real Python

This is a tutorial published on Real Python about building a web crawler using Python, Scrapy, and MongoDB. It provides instructions on installing the Scrapy library and PyMongo for use with the MongoDB database, creating the spider, extracting the data, and storing the data in the MongoDB database.

Web Scraping with Scrapy and MongoDB Part 2 – Real Python

This tutorial, published on Real Python, is a continuation of their previous tutorial on using Python, Scrapy, and MongoDB. It adds further features, including a download delay, which is very important for avoiding overloading the site being crawled.
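In Scrapy, a download delay is normally configured in the project's `settings.py`. The fragment below is a sketch using setting names from the Scrapy documentation; the values are illustrative assumptions, not the ones used in the Real Python tutorial.

```python
# settings.py of a Scrapy project (values are illustrative assumptions)

# Wait about 2 seconds between consecutive requests to the same site.
DOWNLOAD_DELAY = 2

# Vary each wait between 0.5x and 1.5x of DOWNLOAD_DELAY (Scrapy's default).
RANDOMIZE_DOWNLOAD_DELAY = True

# Let Scrapy adapt the delay to how quickly the server responds.
AUTOTHROTTLE_ENABLED = True

# Respect the rules the site publishes in robots.txt.
ROBOTSTXT_OBEY = True
```

A fixed delay is the simplest option; AutoThrottle is worth enabling when the target server's response times vary a lot.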

A quick introduction to web crawling using Scrapy

This is a tutorial made by Xiaohan Zeng about building a website crawler using Python and the Scrapy library. It includes steps for installation, initializing the Scrapy project, defining the data structure for temporarily storing the extracted data, defining the crawler object, crawling the web, and storing the data in JSON files.

Installing and using Scrapy web crawler to search text on multiple sites

This is a tutorial about using the Scrapy library to build a Python-based web crawler. It includes code for generating a new Scrapy project and a simple sample Python crawler that calls functions from the Scrapy library.

Scraping iTunes Charts Using Scrapy Python

This is a tutorial made by Virendra Rajput about building a Python-based data scraper using the Scrapy library. It includes instructions for installing Scrapy and code for building a crawler that extracts iTunes charts data and stores it as JSON.

Web Scraping

This is a tutorial about web scraping using Python and Scrapy. It includes code for scraping a known page, scraping generated links, and scraping arbitrary websites.

Scrapy-Cluster

Scrapy-cluster is a Scrapy-based project, written in Python, for distributing Scrapy crawlers across a cluster of computers. It combines Scrapy, which performs the crawling, with Kafka Monitor and Redis Monitor for cluster gateway and management. It was released as part of the DARPA Memex program for search engine development.